The original title was "On Gamma handling in Unreal.", but I have now changed it to represent the topic more accurately.

--Present Han

For a bit of background information you might want to read this article first:

https://gamedevelopment.tutsplus.com/ar ... edev-14466

Unreal content and rendering were initially designed to *not* perform any gamma correction on output; instead, the gamma correction was preapplied to the texture resources themselves. The table below shows a rough estimate of the gamma values used on the textures.

| Texture type | Estimated gamma |
| --- | --- |
| Surfaces | 1.6-1.9 |
| Meshes | 2.0 |
| Tiles/Sprites | 1.65 |
| Editor Actor Sprites | 2.2 |
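
To make the table a bit more concrete, here is a minimal sketch of how a stored texel value could be converted back to linear light. It assumes a pure power-law encoding (`encoded = linear ** (1 / gamma)`), which is a simplification and not necessarily how the original textures were authored. The names `ESTIMATED_GAMMA`, `to_linear` and `to_encoded` are made up for illustration, and 1.75 is just an assumed midpoint of the range given for surfaces.

```python
# Rough gamma estimates from the table above. 1.75 is an assumed midpoint
# of the 1.6-1.9 range given for surfaces, not a measured value.
ESTIMATED_GAMMA = {
    "surface": 1.75,
    "mesh": 2.0,
    "tile_sprite": 1.65,
    "editor_actor_sprite": 2.2,
}


def to_linear(value, gamma):
    """Decode a stored channel value in [0, 1] to linear light (pure power law assumed)."""
    return value ** gamma


def to_encoded(linear, gamma):
    """Inverse of to_linear: encode a linear value with the given gamma."""
    return linear ** (1.0 / gamma)


stored = 0.5
for kind, gamma in ESTIMATED_GAMMA.items():
    print(f"{kind}: stored {stored} -> linear {to_linear(stored, gamma):.3f}")
```

The same stored value maps to a different linear intensity for each texture type, which is why a single global conversion would probably not be enough.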

Most likely they decided to render this incorrect way, at the expense of poor lighting, to avoid the heavy artifacts that would otherwise have been introduced by the limitations of that time (Thief 1/2 is a good example of the "correct" way around that time, which introduced heavy artifacts). Everything in rendering was built around and/or to counter issues with the gamma-incorrect rendering. Also, in the case of mesh skins, they used some heavy contrast enhancement (sigmoidal is my current educated guess).

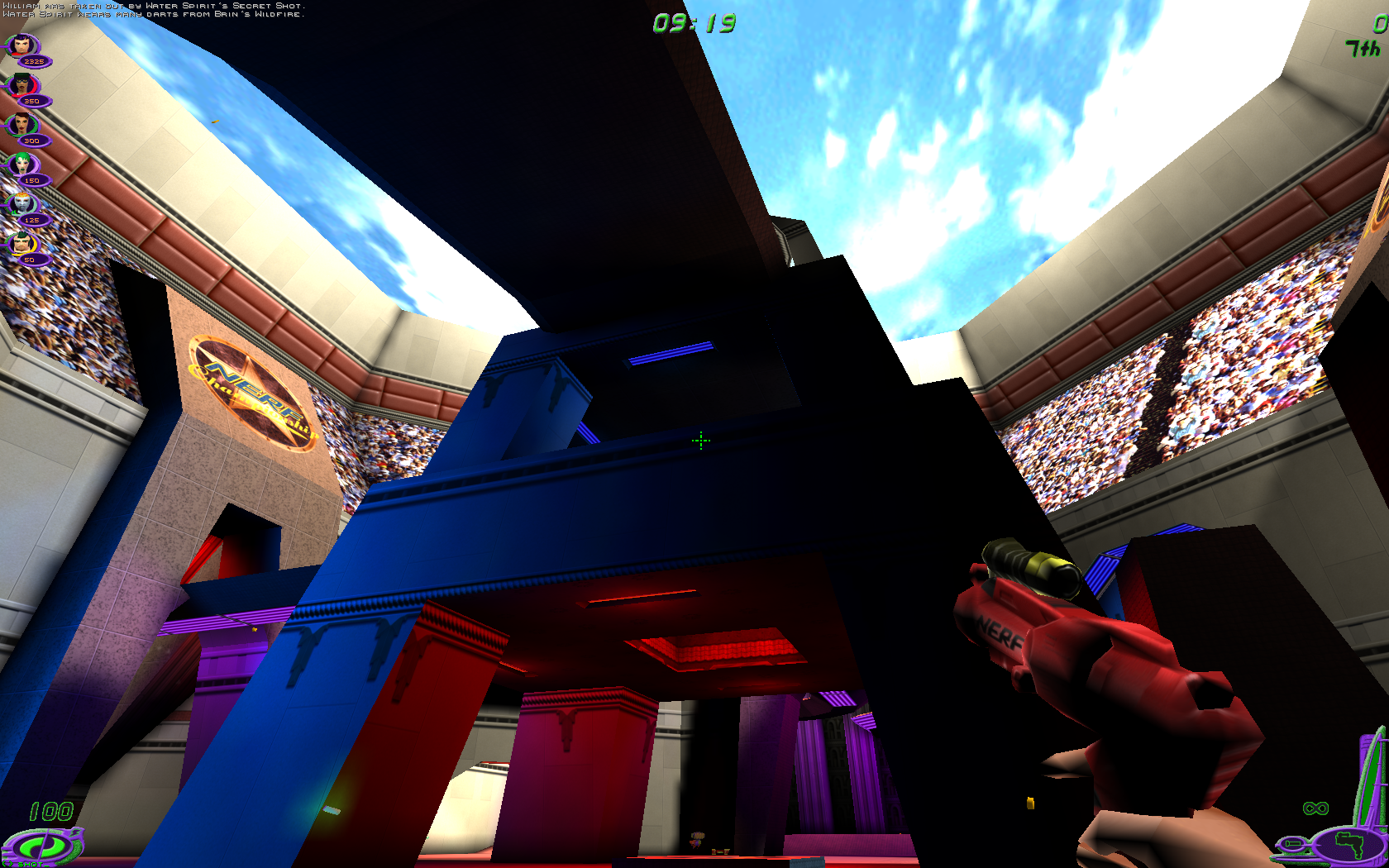

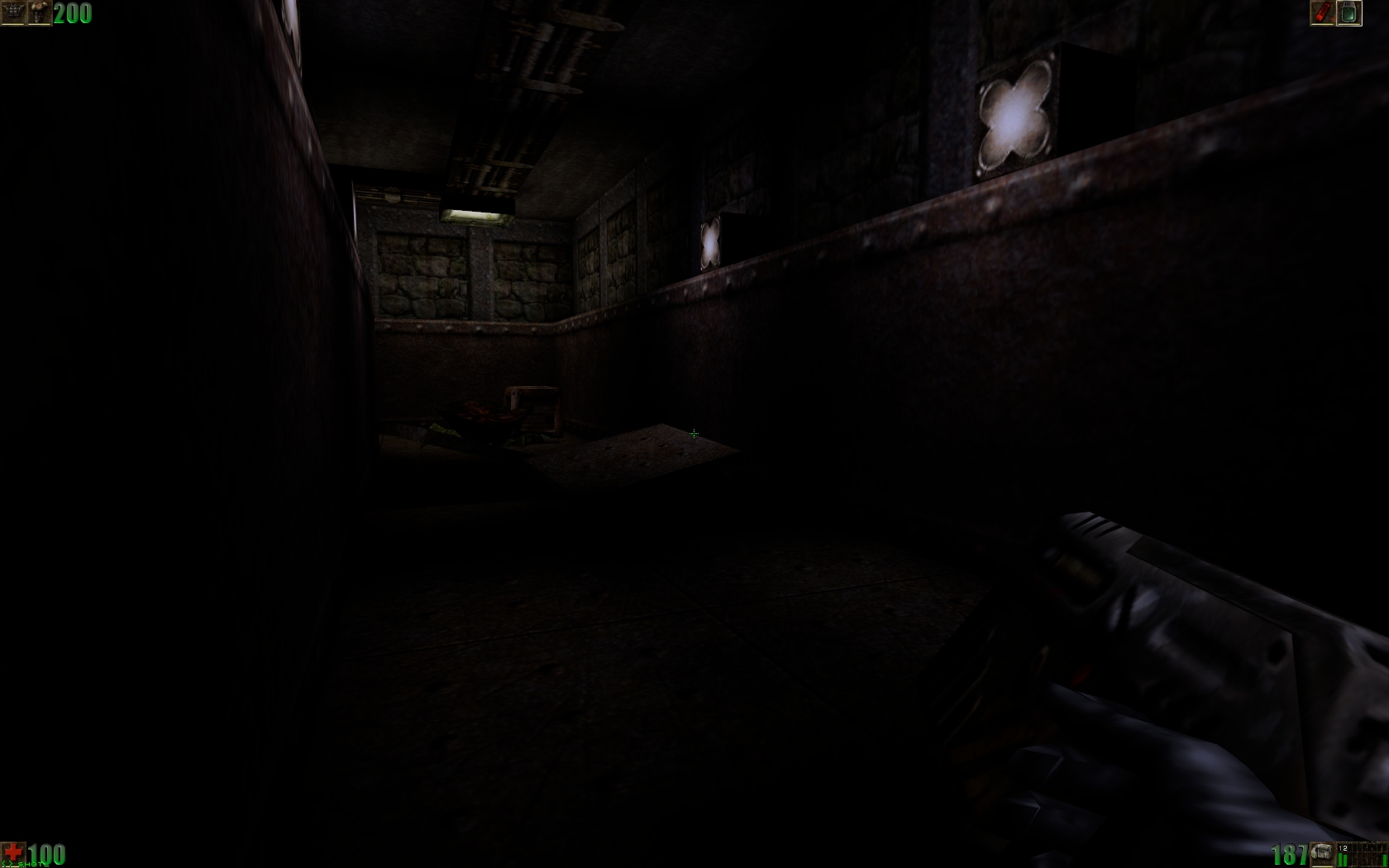

Probably the best RenDev and version to judge how Unreal initially looked is Software Rendering on version 200. It shows some very well-balanced lighting.

I made some tests to mimic the rendering behaviour of the version 200 SoftDrv. If you have Unreal 226b installed and want to give it a try: http://coding.hanfling.de/NoGamma226b-20150328.zip (If there is some interest I can also make a 227i build of it, but it's mostly a demo for surface lighting, not intended for production use).

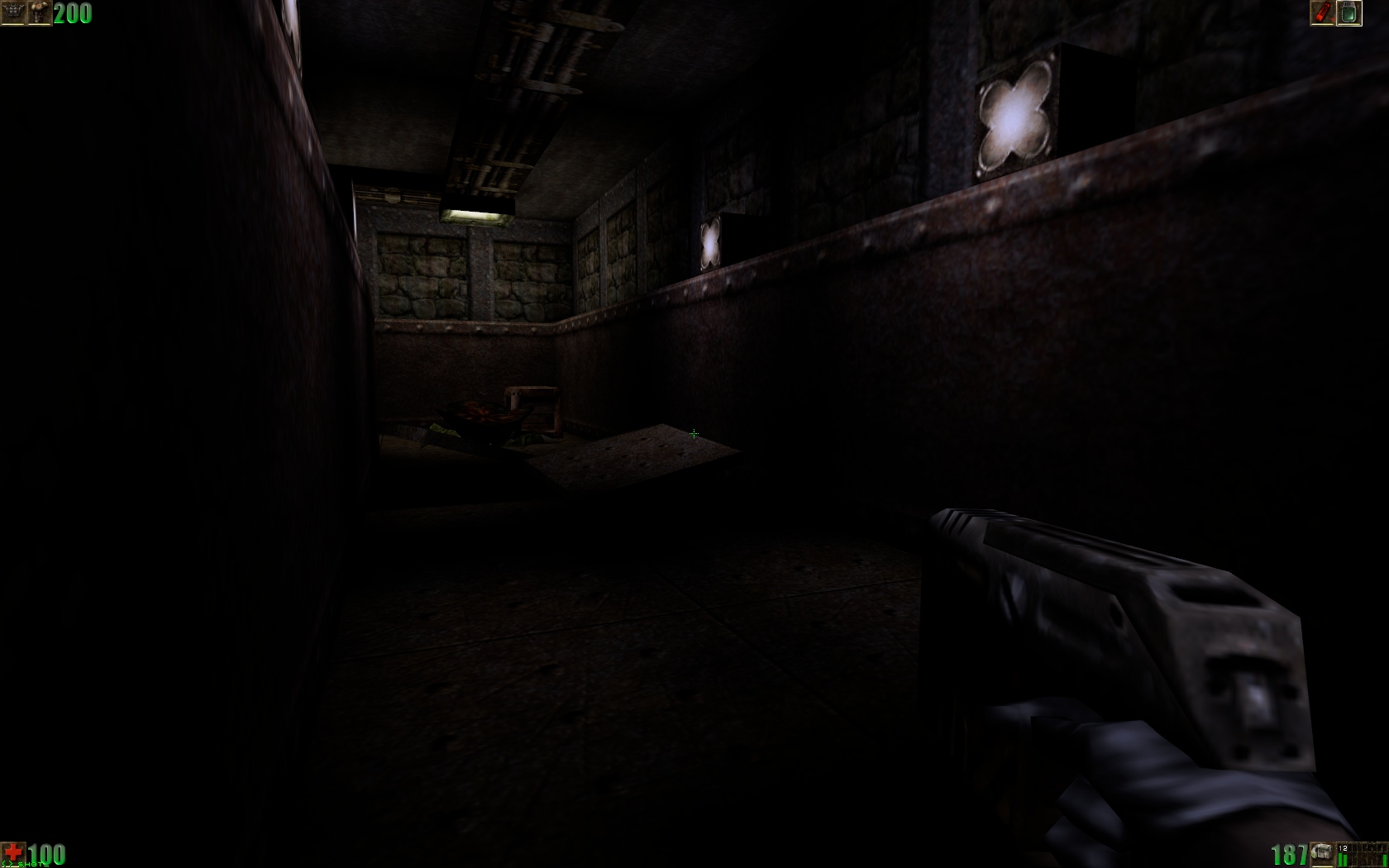

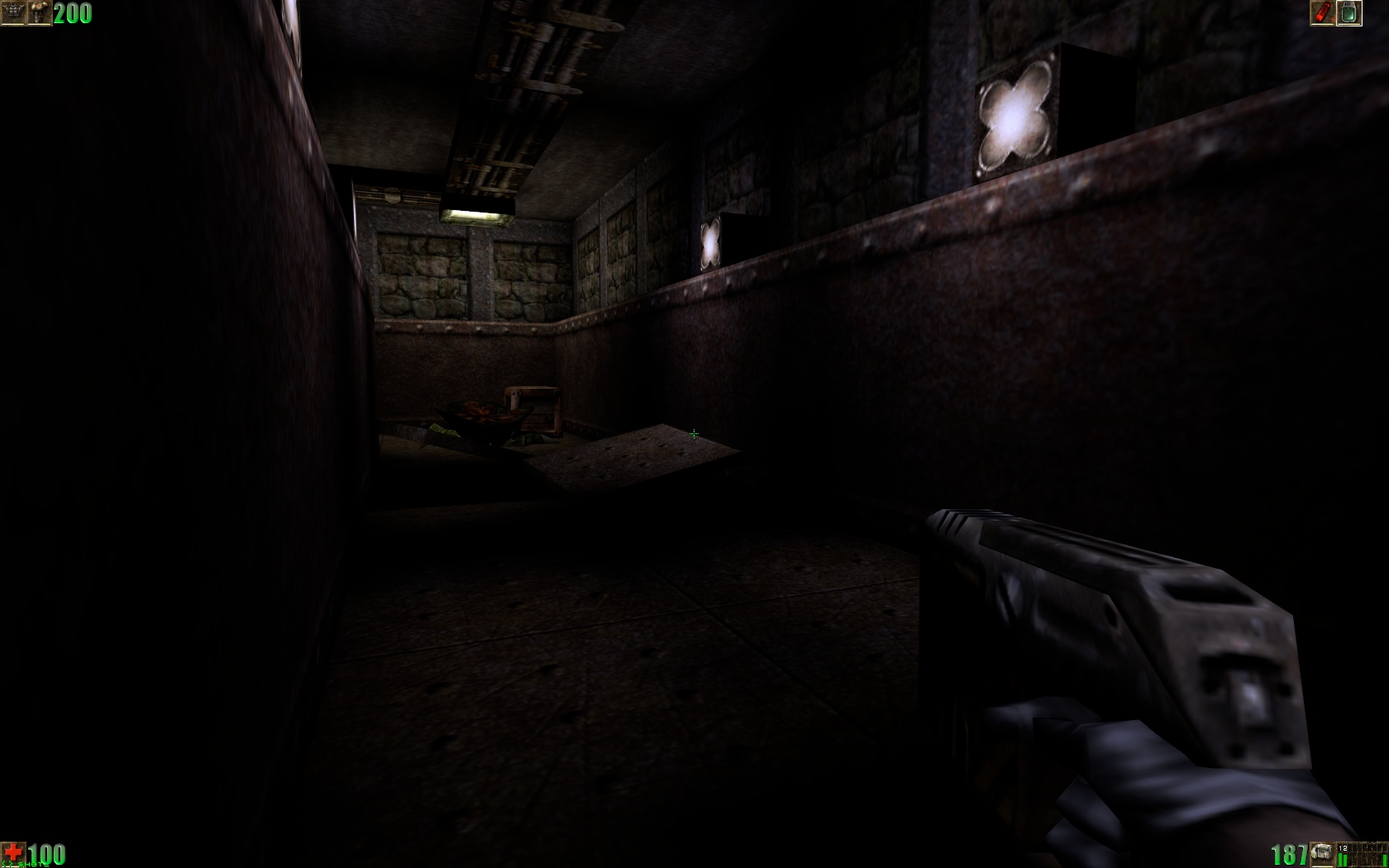

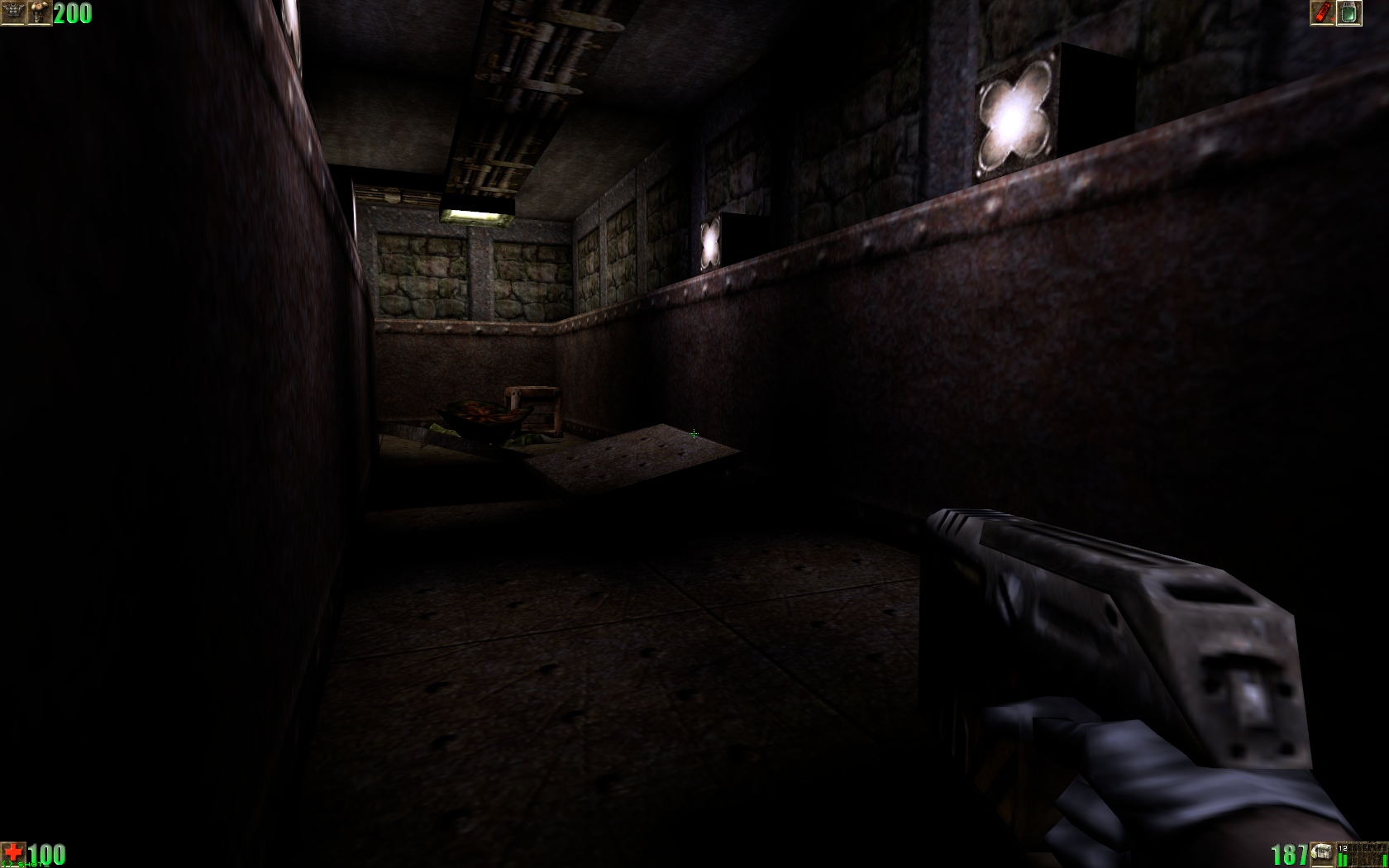

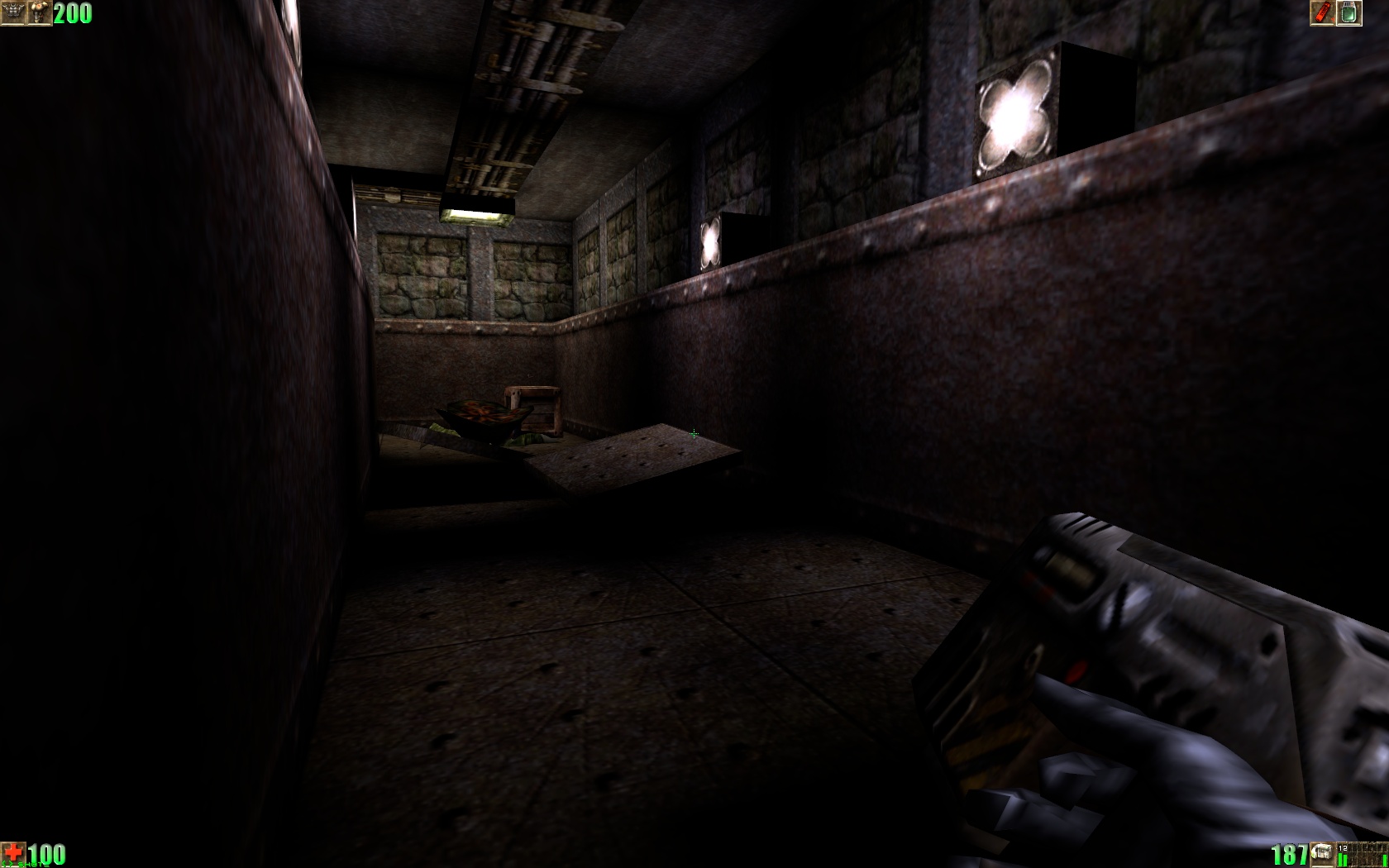

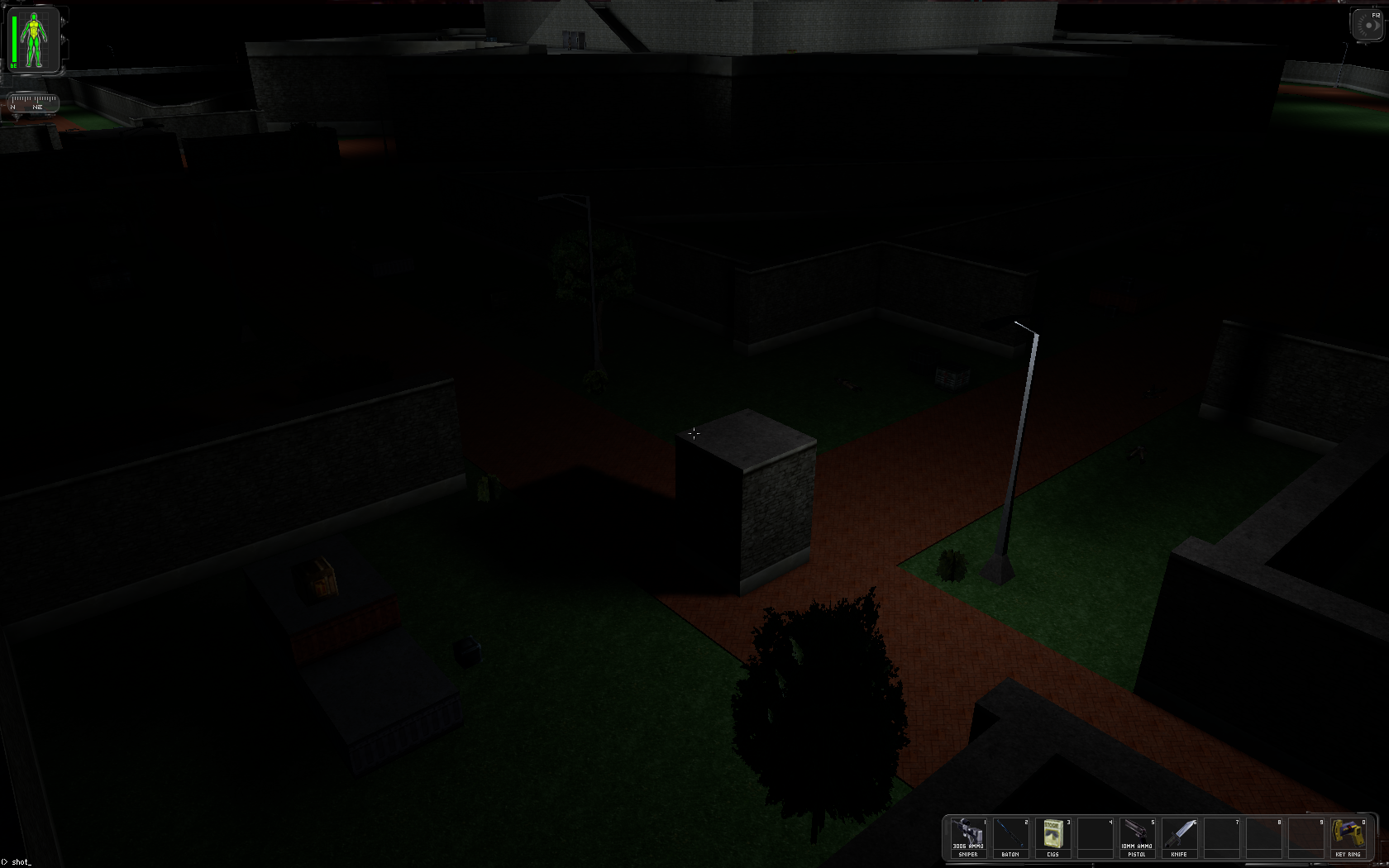

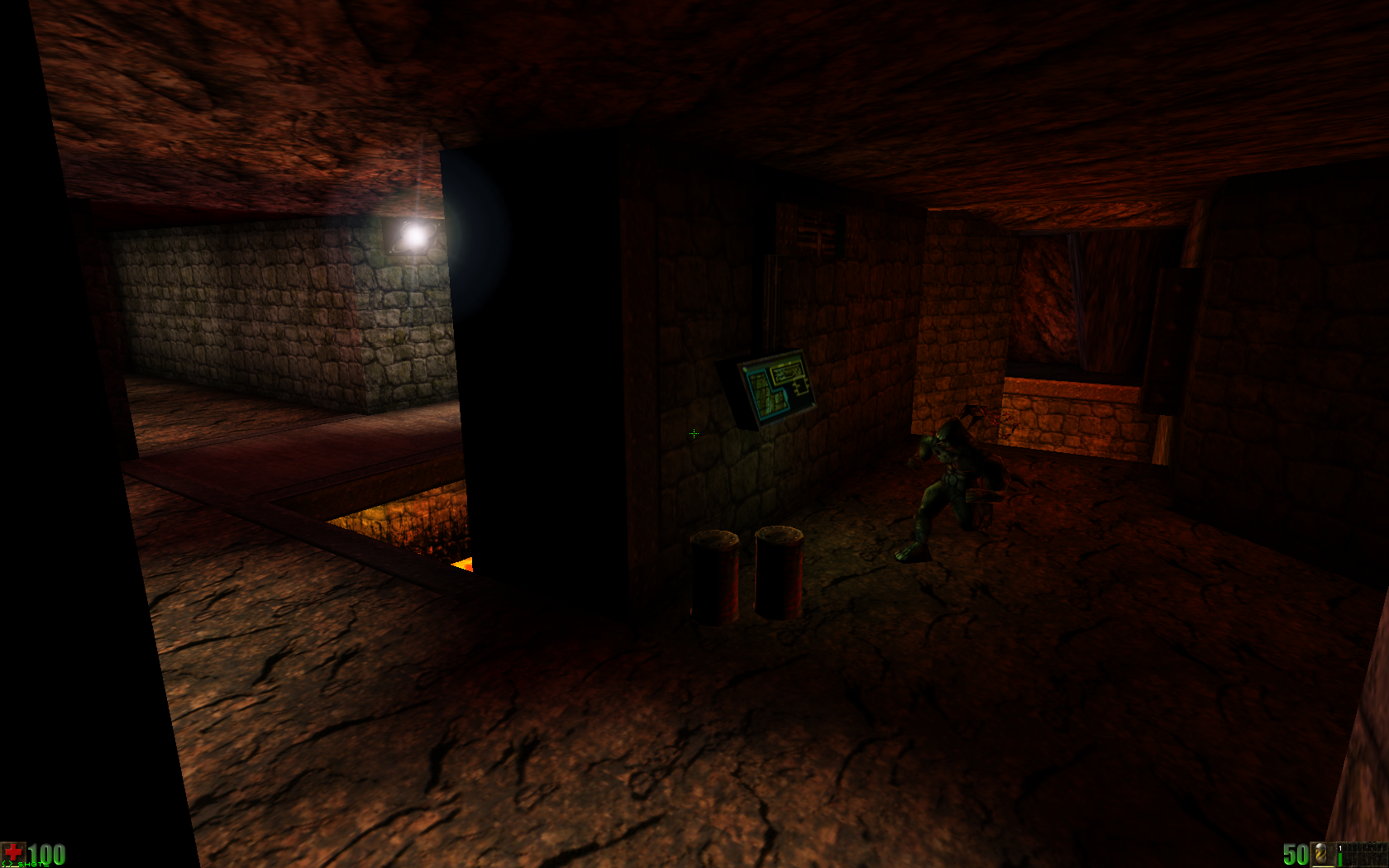

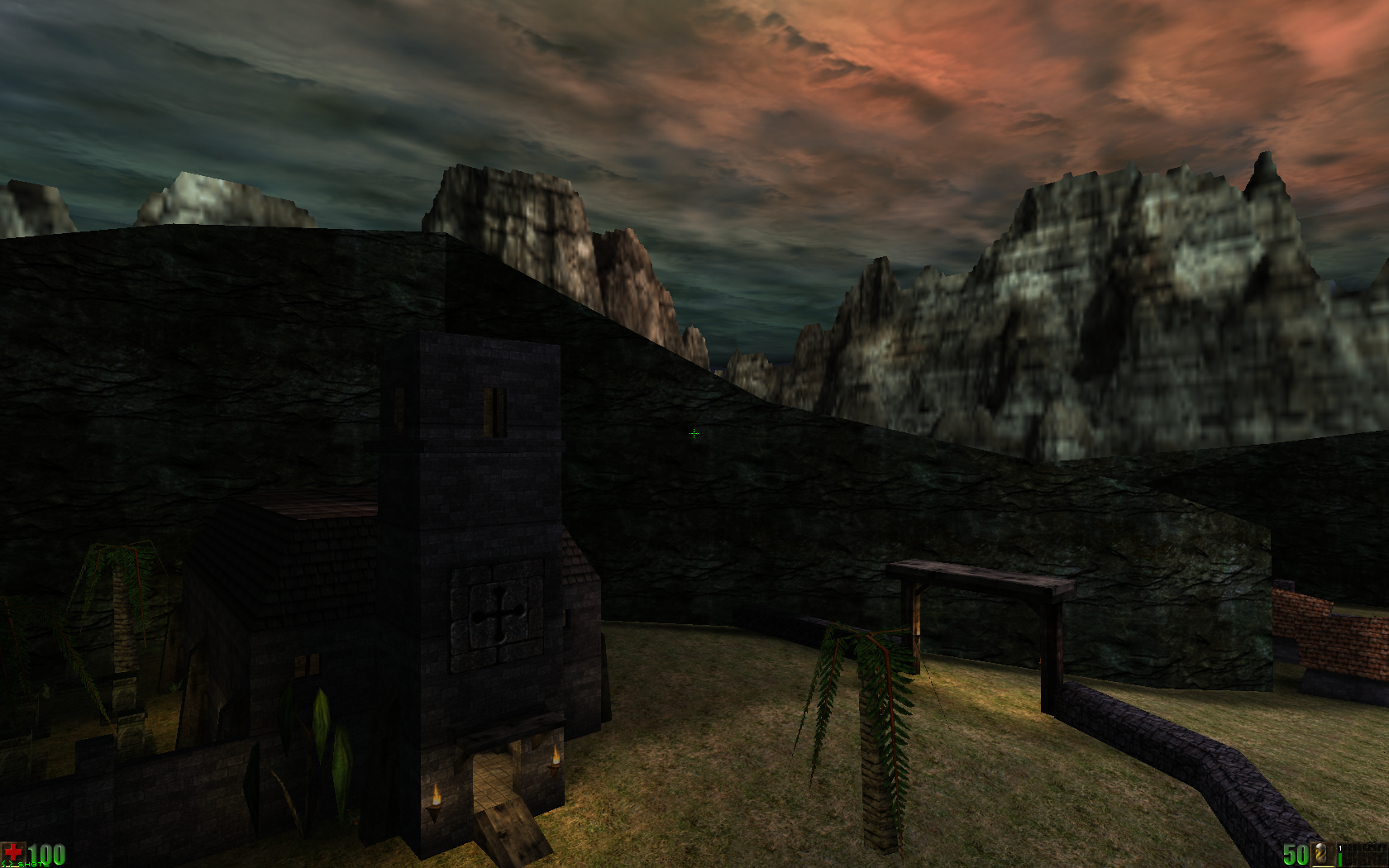

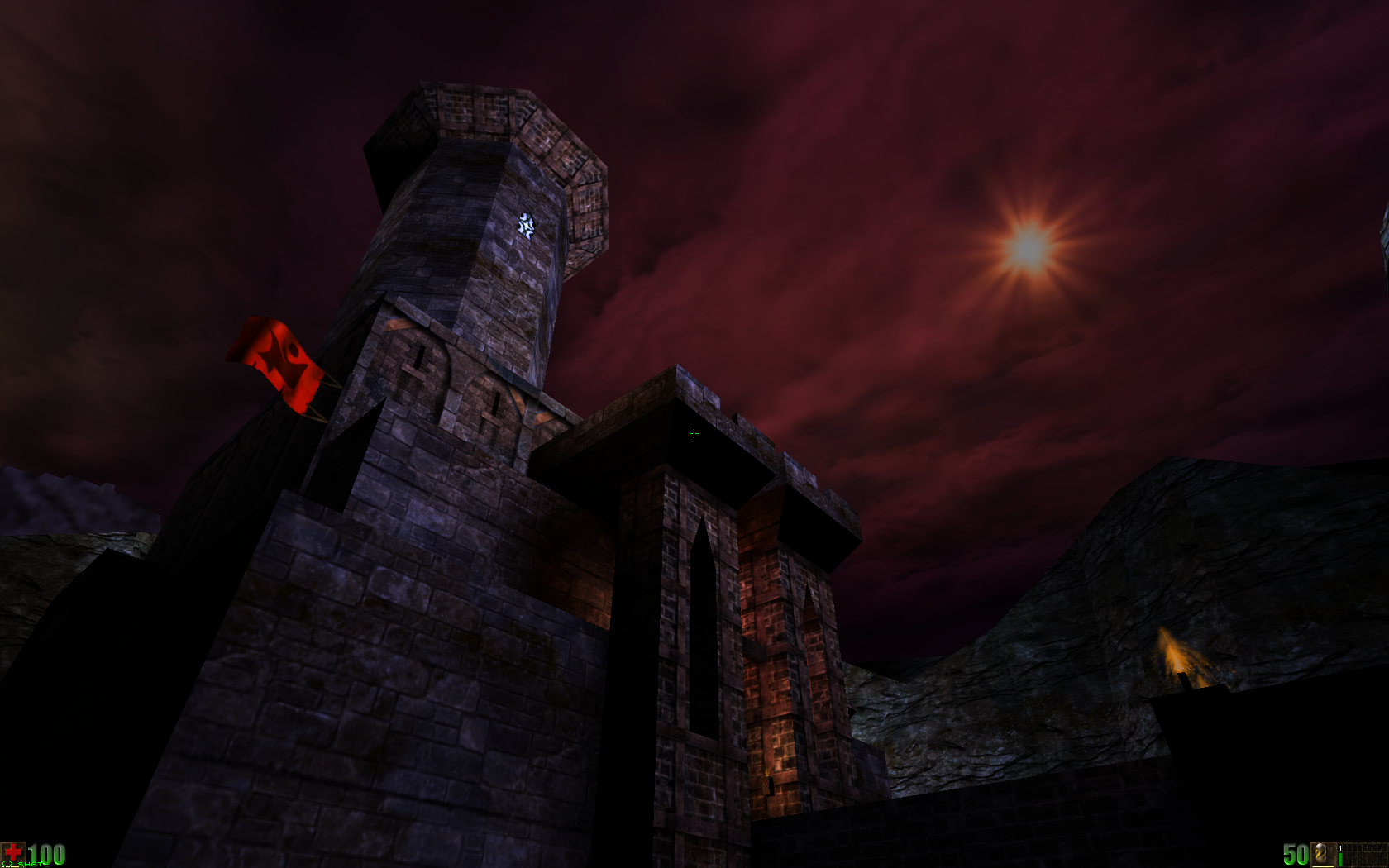

Otherwise here are some screenshots:

Note that the more saturated look of GlideDrv was caused by a combination of a slight output gamma "correction" (1.25 by default) and probably the pyramid scaling, which was neither linear nor quadratic. It may even be that it didn't handle color saturation correctly or consistently at all.

So much for the nostalgic "how it was" part. The really complicated things start when one wants to turn the gamma-incorrect rendering into gamma-correct rendering to improve the odd lighting. Basically it involves three parts: using correct gamma correction on output (based on the monitor!), adjusting textures to contain or to be loaded as linear data (gamma correction, removing the extreme contrast enhancements on mesh textures, etc.), and the lighting itself.
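
As a rough illustration of how these three parts fit together, here is a small sketch comparing the two approaches for a single channel. It is not engine code: the function names are hypothetical, the texture gamma of 2.0 is taken from the mesh estimate above, the display gamma of 2.2 is an assumed typical monitor value, and `light` is assumed to be a linear scale factor in [0, 1].

```python
def shade_gamma_incorrect(texel, light):
    # Original approach: multiply the gamma-encoded texel by the light level
    # and send the result to the screen without any output correction.
    return texel * light


def shade_gamma_correct(texel, light, texture_gamma=2.0, display_gamma=2.2):
    # Texture part: bring the stored texel back to linear data.
    linear_texel = texel ** texture_gamma
    # Lighting part: apply the light in linear space.
    linear_result = linear_texel * light
    # Output part: gamma correction based on the (assumed) monitor gamma.
    return linear_result ** (1.0 / display_gamma)


texel = 0.5
for light in (1.0, 0.5, 0.25):
    old = shade_gamma_incorrect(texel, light)
    new = shade_gamma_correct(texel, light)
    print(f"light {light}: gamma-incorrect {old:.3f}, gamma-correct {new:.3f}")
```

With these assumed values the two paths drift further apart the dimmer the light gets, so existing light levels cannot simply be reused unchanged.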

While the first part is straightforward, the second part, adjusting resources, will be a lot of work and will also include developing a toolchain to ease converting existing resources (I currently have something in development for this task).

The really complicated part is the lighting. It basically splits into two issues. One needs to adjust the overall light levels, which probably means creating another drop-off function for the light data itself, but the more problematic point is keeping the color saturation levels, as these are heavily influenced by this adjustment.
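
To show why saturation is affected, here is a small sketch that applies a per-channel power curve to a colored light value and measures the result. Treating the adjustment as a plain per-channel power curve and using HSV saturation as the measure are both assumptions made for illustration; the actual light adjustment may well look different. `apply_curve` and `saturation` are hypothetical helper names.

```python
import colorsys


def apply_curve(rgb, exponent):
    """Apply the same power curve to each channel of an RGB triple in [0, 1]."""
    return tuple(c ** exponent for c in rgb)


def saturation(rgb):
    """HSV saturation, i.e. (max - min) / max of the three channels."""
    return colorsys.rgb_to_hsv(*rgb)[1]


color = (0.8, 0.4, 0.2)  # an arbitrary orange-ish example value
for exponent in (1.0, 1.0 / 2.2, 2.2):
    adjusted = apply_curve(color, exponent)
    print(f"exponent {exponent:.3f}: saturation {saturation(adjusted):.3f}")
```

An exponent below 1 pulls the channels closer together and washes the color out, while an exponent above 1 pushes them apart, so any change to the overall light curve also shifts how colorful the lighting looks.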

So much for now.